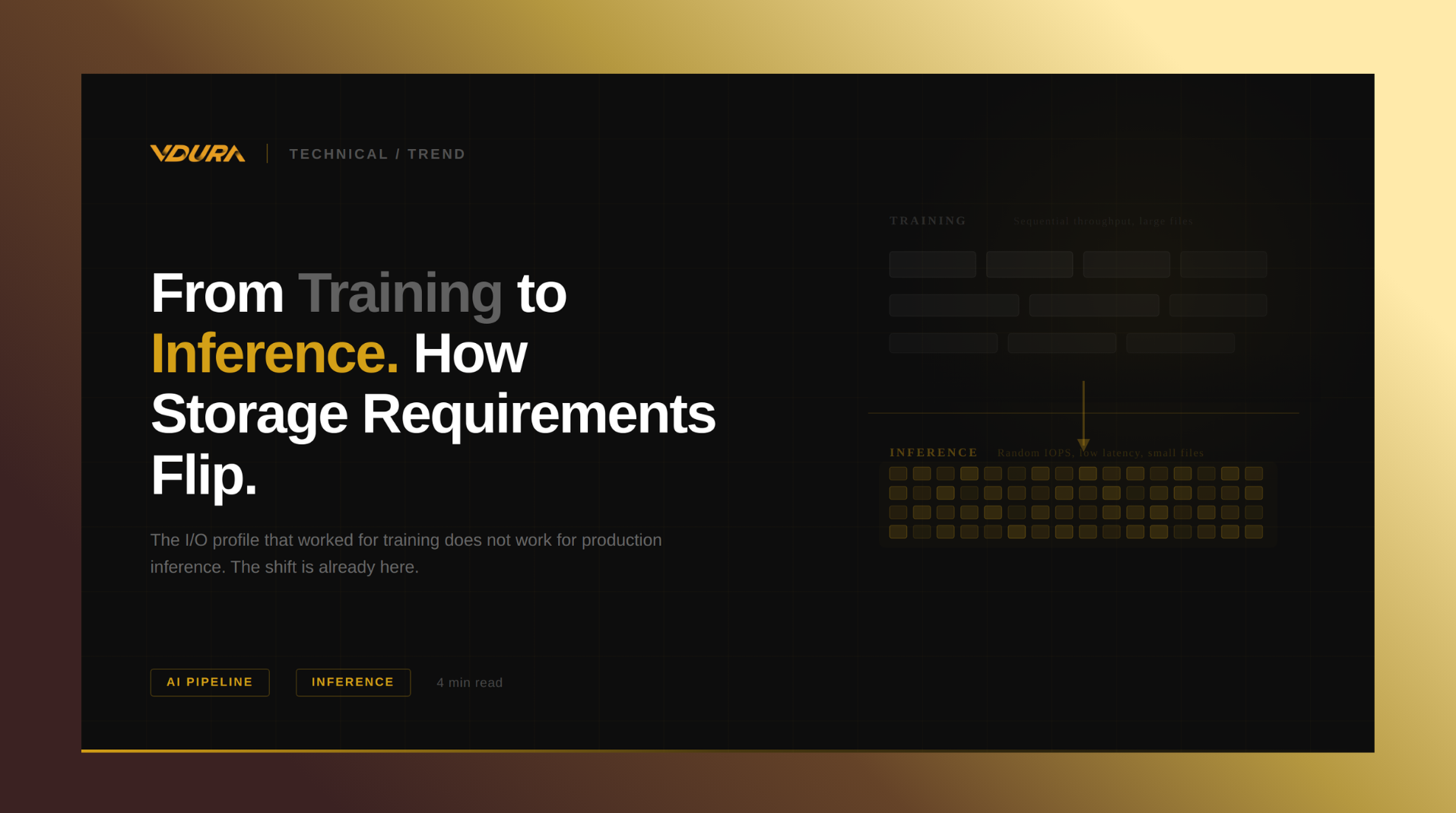

From Training to Inference: How Storage Requirements Flip When AI Goes to Production

For most of the AI infrastructure buildout, storage was optimized for one thing: feeding training runs. The playbook

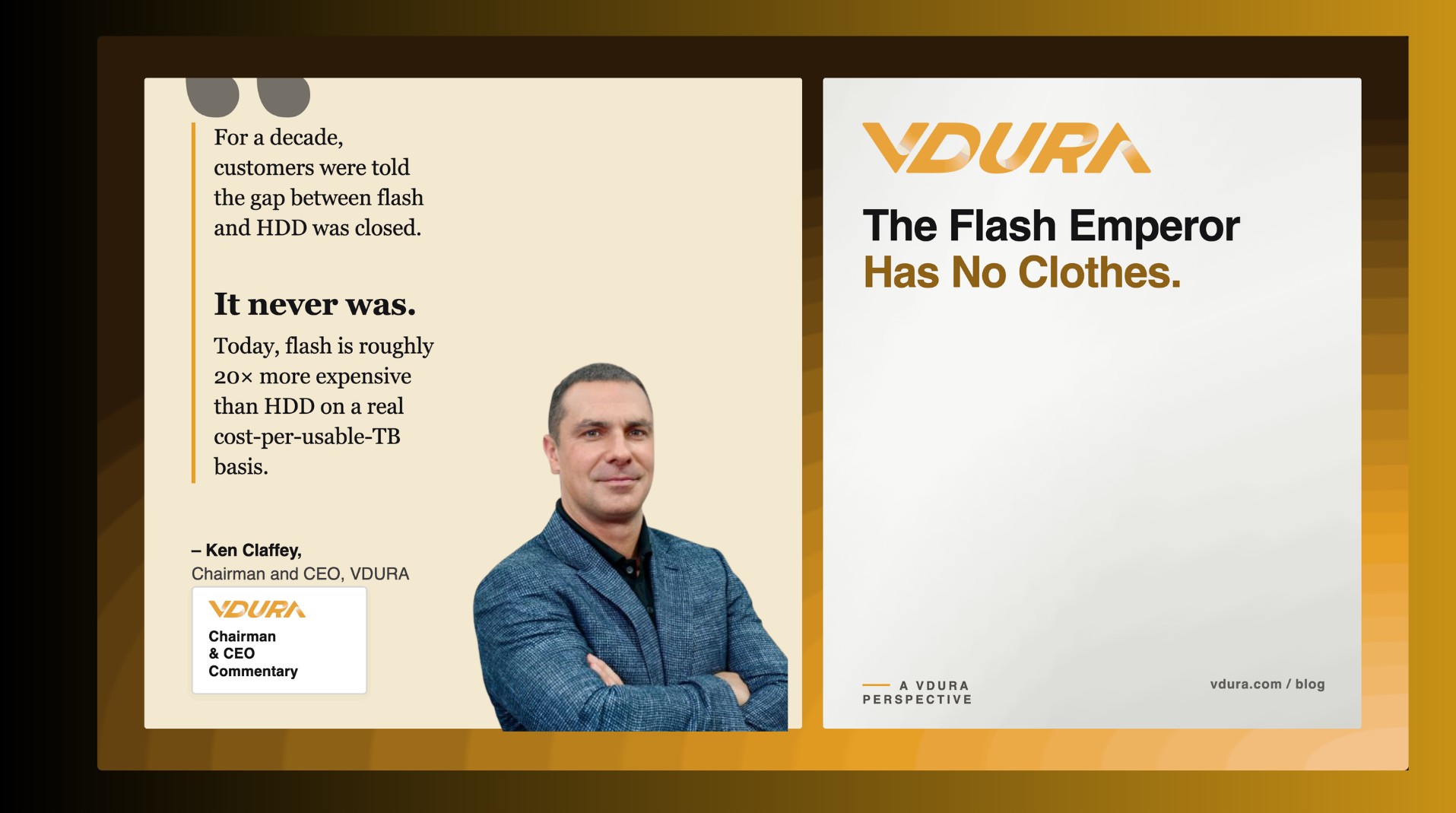

The receipts are in on every claim made in “The Flash Emperor Has No Clothes,” and a closer

Source: Blocks and Files: VDURA CEO Ken Claffey reckons that flash price rises and shortages have finished off

A response to Everpure (aka Pure Storage), and to a decade of all-flash economics that were never as advertised By

HDDs Aren’t Dying. They’re Disappearing Off Shelves.

The most resilient storage architectures in AI infrastructure have one thing in common:

Some storage architectures silently consume 5 GB of DRAM and up to 4 CPU cores per GPU node. At 500 nodes, that’s 2.5 TB of DRAM and 2,000 cores lost to storage overhead — a hidden tax you can no longer afford.
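
The cluster-wide figures follow directly from the per-node numbers quoted above. A minimal sketch of that arithmetic, assuming decimal units (1 TB = 1,000 GB) and treating the per-node values as worst-case defaults; the function name `storage_overhead` is hypothetical and used only for illustration:

```python
def storage_overhead(nodes, dram_gb_per_node=5, cores_per_node=4):
    """Return (total DRAM in TB, total CPU cores) consumed by storage overhead.

    The defaults are the per-GPU-node figures quoted in the teaser; adjust
    them to match the measured footprint of your own storage client.
    """
    dram_tb = nodes * dram_gb_per_node / 1000  # decimal TB (assumption)
    cores = nodes * cores_per_node
    return dram_tb, cores


dram_tb, cores = storage_overhead(500)
print(f"{dram_tb} TB DRAM, {cores} cores")
```

Because both totals scale linearly with node count, doubling the cluster doubles the overhead.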

April 7, 2026. Source: CIO – The enterprise storage market is currently experiencing unprecedented SSD price volatility driven by massive AI

NAND prices surged up to 60% in one quarter. Storage now consumes up to 30% of the infrastructure budget. The operators who planned for this are fine. The rest are doing the math.

San Jose, CA, March 16–19, 2026 NVIDIA GTC 2026, the world’s premier conference for AI, high-performance computing, and

March 6, 2026: On International Women’s Day, we honor the women propelling high-performance computing and AI forward. While these